|

Planner is the oldest alternative to Microsoft Project and also the one that has gone the longest without an update. Even so, its way of working keeps the program among the most widely used. Planner works with XML files and PostgreSQL, and it lets you use or export Microsoft Project files. In addition, Planner can export plans or parts of the task list to PDF, which makes it easier to share projects and schedules. Planner integrates quite well with GNOME thanks to the GTK libraries, although it does not work well with Microsoft Project's newer formats.

OpenProject has two versions: one free and one paid. The free version covers basic needs, including support for Microsoft Project files, Gantt charts, software project planning, the Scrum development method and a long list of other functions. The paid version contains all of the above and adds features such as a custom logo in the program, a cloud application and services such as messaging. These are attractive functions for companies and entrepreneurs that need high performance, and those who don't need them always have the free version. OpenProject can be obtained from its official page.

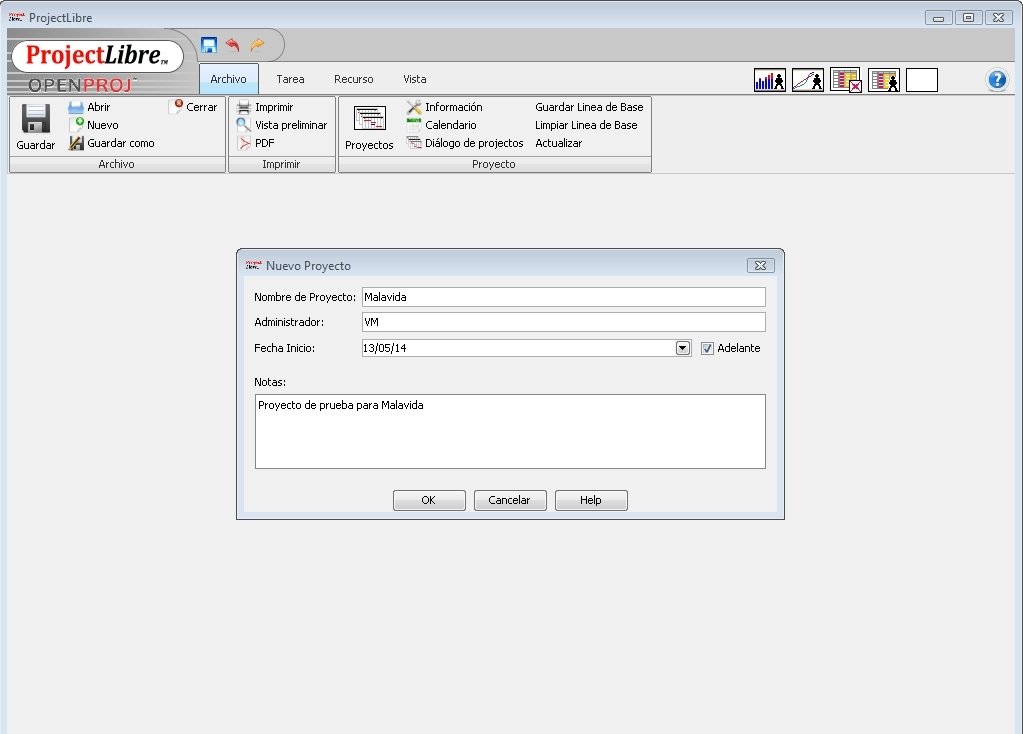

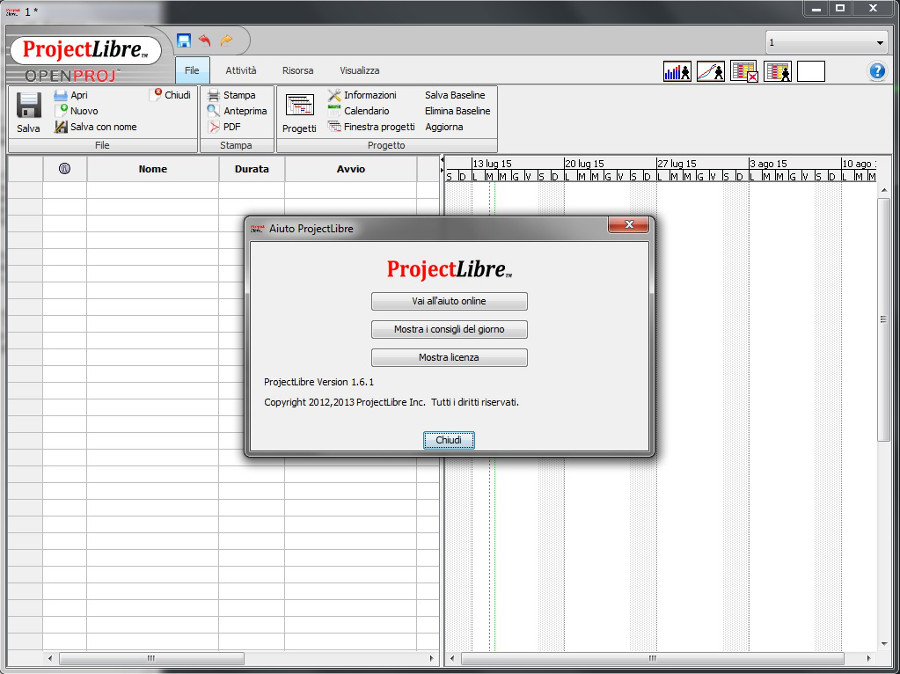

ProjectLibre is a fork of the OpenProj program; both applications try to imitate or replace Microsoft Project. ProjectLibre is an application compatible with Microsoft Project 2003, 20 files. It also supports Gantt charts, resource histograms, a diary, software project planning and network diagrams, among others. ProjectLibre differs greatly from the rest of the programs in that there is extensive documentation about the application in several languages, documentation that we can consult and share within the company if that is the area we want to reach. ProjectLibre can be obtained for free from its SourceForge page.


Date unknown (I don't have a copy of this version. However, it isn't mentioned anywhere in The Making of PopCap's PvZ) Zombies!(?)/Lawn of the Dead 0.95? - Date unknown (I believe that 0.95 exists, since the version numbers are probably incremental. Zombies!(?)/Lawn of the Dead 0.94 - (The pictures in the PowerPoint only show "Lawn of the Dead" (from the title bar), however, the product name has changed to PvZ since 0.93, so I assume that PvZ is the actual name of this build) This wacky plant-on-zombie conflict will take you to the outer edges of Neighborville and back again. Zombies!/Lawn of the Dead 0.93 - Date unknown

Date unknown (I don't have a copy of this version. Lawn of the Dead 0.92 - Halloween 2007 (probably) - Date unknown RTM (release to manufacturing) Plants vs. Zombies is a Flash-powered game that can no longer be played due to the discontinuation of Flash. You'll need to think fast and plant faster to stop 5 different types of zombies dead in their tracks. Zombies, you're armed with 11 zombie-zapping plants like peashooters and cherry bombs. Zombies 1.0 - (according to the note in the PowerPoint) Plants vs Zombies is a real-time strategy / tower defense game, developed by PopCap, in which you will have to protect your garden against invading un. In this free online version of Plants vs.

These businesses on the map are authorized sellers, not service centers or places that are solely Fitbit repair shops. If you’re in a hurry, you can charge the earbuds in the case for 15 minutes to get about 1.5 hours of listening time. A Fitbit Repair Shop Actually Means Authorized Seller… The included charging case stores about 2 additional charges, so you can get up to 24 hours of listening time before you need to connect to a USB wall charger. The earbuds can last up to 8 hours before needing to be recharged. Outdoors, the signal can be more susceptible to interference and I noticed that the left and right earbuds would get out of sync momentarily, but this was very infrequent and typically only happened when I was handling my phone (where my hand was likely blocking the Bluetooth signal). While using the earbuds indoors, I did not notice any issues with the earbuds’ ability to connect to each other and the sound came through both earbuds fine. So if you carry your phone in your left pocket, you’ll want to use the BackBeat app to make the left earbud the primary. Ideally, you’ll want the primary earbud to be on the same side that you normally carry your phone. However, unlike many other truly wireless earbuds, you can actually switch the primary earbud. A single tap increases the volume while a long tap decreases the volume, which for some reason is tough for me to remember.

From the factory, the right earbud will serve as the primary earbud. On-earbud controls: You can adjust the volume by tapping on the left earbud, but it’s a little awkward. However, they are not completely waterproof and should not be submerged in liquid.

IP57 Sweat-proof: I haven’t noticed any issues with these earbuds and my copious amounts of sweat. As such, these may not be ideal if you plan on wearing them in loud locations such as airplanes and trains.

Tensions between Pugh and Wilde have, like the love triangle between Styles, Wilde, and Sudeikis, threatened to eclipse the movie itself. There have also been rumors that Pugh didn’t care for Wilde and Styles’s relationship. And in one of the rare moments when she has spoken about the movie, Pugh grumbled at the idea of her performance being reduced to sex scenes. While Wilde has been effusive about Pugh, Pugh has not been promoting this movie or returning Wilde’s warmth. Meanwhile, Pugh, the star of Don’t Worry, hasn’t been on the same page as her director. That timing would explain why Sudeikis served Wilde custody papers during a huge promotional stop for the movie. Speculation is that Wilde and Styles may have had an affair on set. While filming, Wilde split with her longtime partner Jason Sudeikis and began dating the lead of Don’t Worry, pop star Harry Styles.

Much of the drama comes back to director Olivia Wilde, who among other things told Variety that only women have orgasms in the film. Now that I’ve experienced the Don’t Worry Darling press tour, I wish I could go back in time and live in a world where I didn’t. I couldn’t tell you the plot (something about ’50s housewives?) but know all too much about the supposed behind-the-scenes drama, from the interviews to the non-interviews, the snubs and the Shia and the spit takes. Personally, everything I’ve learned about the feature film - which stars Florence Pugh and should use a comma after “worry” in its title - has been against my will. And some people have the Don’t Worry Darling press tour.

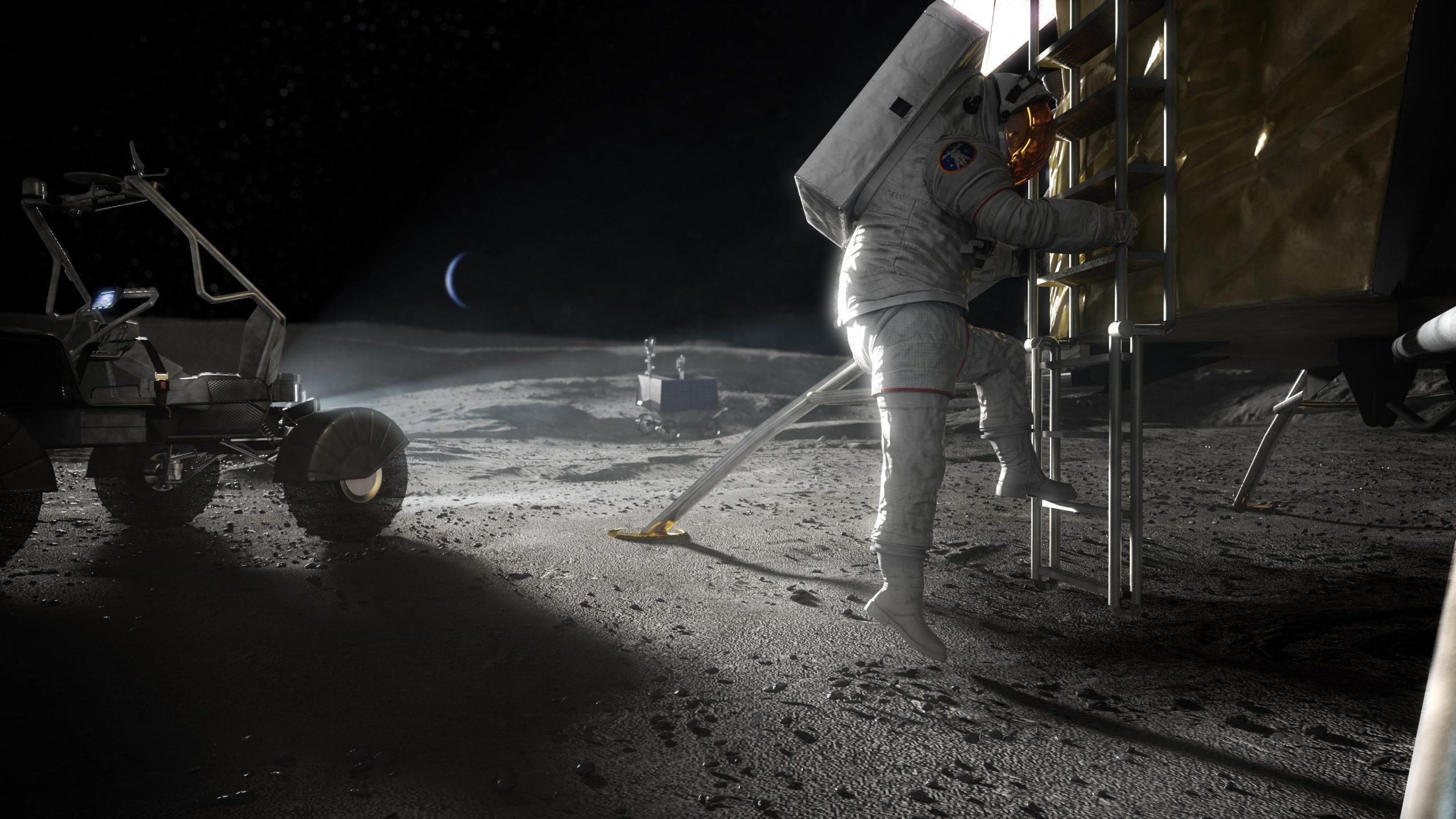

"Before that, we have a number of test launches and landings that we'll be we'll be releasing here soon." "We'll be testing our full lander systems and the full architecture prior to any astronauts entering the vehicle and that will be roughly one year prior," John Couluris, Blue Origin vice president of lunar transportation, said Friday. A substantial redesign to accommodate more cargo and crew has been added since then.

"For the Artemis V mission, NASA’s Space Launch System rocket will launch four astronauts to lunar orbit aboard the Orion spacecraft. Once Orion docks with Gateway, two astronauts will transfer to Blue Origin’s human landing system for about a weeklong trip to the moon’s south pole region where they will conduct science and exploration activities," NASA said in a statement.

Blue Origin, owned and personally funded by billionaire Jeff Bezos, the founder of Amazon and the world's richest person, initially debuted plans for its Blue Moon lander in 2019.

Blue Origin's reusable lander called "Blue Moon" was selected to deliver astronauts to the lunar surface for the agency's Artemis V mission slated for no earlier than 2029. "An additional, different lander will help ensure that we have the hardware necessary for a series of landings to carry out the science and technology development on the surface of the moon," said Bill Nelson, NASA administrator, during Friday's announcement.

Blue Origin, along with partners Lockheed Martin, Draper, Boeing, Astrobotic, and Honeybee Robotics, was announced Friday as the second company selected by NASA to develop a human lunar lander system for Artemis missions to the moon.

Blue Origin joins SpaceX and its Starship vehicle as the only other company selected to develop spacecraft to carry Artemis astronauts to the surface of the moon in an effort that NASA calls "Sustaining Lunar Development." Blue Origin's NASA contract is valued at $3.4 billion.

His technologic advances included many concepts taken for granted today, including custom immobilization of patients, beam hardening with metallic filters to achieve higher photon energies, and collimation/shaping of beams. Coutard was among the first to recognize that different cancer histologies and locations carried distinct probabilities for radiocurability. 
This occurred during a time when dosimetry was extremely crude and unreliable. His methodical nature and keen observational skills led him to customize treatment intensities based on the levels of radiotherapy-induced skin desquamation and oral mucositis. Using this strategy, he was able to cure patients with a variety of head and neck malignancies and to popularize this concept of fractionation in the international community (2, 13–15). In the 1920s, Coutard applied the concept of fractionated XRT with treatment courses protracted over several weeks. Henri Coutard joined Regaud at the Radium Institute of Paris, where he operated a basement X-ray unit. The Standard Chemical Company began commercially marketing radium from Colorado mines by 1913, and this “wonder drug” subsequently found its way into many products and applications. By 1904, patients in New York were undergoing implantation of radium tubes directly into tumors, representing some of the first interstitial brachytherapy treatments. By 1902, radium had been used successfully to treat a pharyngeal carcinoma in Vienna. The notion of using radioactive elements to treat cancer probably dates back to 1901, when Becquerel experienced a severe skin burn while accidentally carrying a tube of radium in his vest pocket for 14 continuous days. Shortly thereafter, Marie and Pierre Curie discovered radium and polonium; their stories have been nicely chronicled (7–9). Antoine-Henri Becquerel, a physics professor in Paris, was the first to recognize natural radioactivity while working with uranium salts. The field grew rapidly through the last years of the 19th century and into the first years of the 20th (3). Aside from famous cases such as these, however, most tumors around this time could not be cured without extensive normal tissue damage, given the low energies (and hence limited depths of penetration) of these early X-rays. 
The first deep-lying tumor to be eradicated by X-rays was probably a malignant sarcoma of the abdomen, treated over 1 1/2 years in New Haven by Clarence Skinner. Palliation of painful tumors was reported as early as 1900 (6). Thor Stenbeck and Tage Sjogen of Sweden reported successes with treating skin cancers by 1899. Émil Grubbé, a medical student in Chicago at the time, would later claim to have been the first to treat cancer patients with X-rays in 1896 (5). Only 7 months after Roentgen's discovery, however, an 1896 issue of the Medical Record described a patient with gastric carcinoma who had benefited from radiotherapy delivered by Victor Despeignes in France. The very earliest X-ray treatments were for benign conditions like eczema and lupus. The first therapeutic uses of X-rays in cancer quickly followed this initial discovery (3, 4).

Bringing these laboratory discoveries and techniques to the clinic is the key challenge. There is now a tremendous body of knowledge about cancer biology and how radiation affects human tissue on the cellular level. The radiobiological discoveries over the past century have likewise been revolutionary. Perhaps the most important of these developments have been the paradigm of fractionated dose delivery, technologic advances in X-ray production and delivery, improvements in imaging and computer-based treatment planning, and evolving models that predict how cancers behave and how they should be approached therapeutically. These initial efforts stimulated a revolution of conceptual and technological innovations throughout the 20th century, forming the basis of the safe and effective therapies used today. The use of ionizing radiation for the treatment of cancer dates back to the late 19th century, remarkably soon after Roentgen described X-rays in 1895; the use of brachytherapy followed after Marie and Pierre Curie discovered radium in 1898.

If you are looking to get into portrait photography, a reflector is an excellent first purchase that won’t break the bank. In a studio setting, reflectors are often used as fill lights, bouncing back the spill from a key light in order to lower the lighting ratio on a subject. With a reflector you can change the angle of the shadows and gain some control over the contrast you are creating. Since the light from a reflector is directable, it gives more options when working outdoors, where previously you would have had to rely only on the angle of the sun. That means we can bounce artificial light off a reflector to get a nicer quality of light, or we can use them outdoors to bounce sunlight back at a subject. It has many uses because bouncing light gives it a much softer look. This piece of material usually contains a frame that keeps it taut enough to angle in a specific direction and therefore control the direction of the bounced light. Most of the time when people refer to using a reflector, they are talking about using a piece of reflective material to bounce light in a certain direction. 30 photography reflectors are ideal for still life photography and allow the usage of bigger subjects with maximum results. The 5-in-1 Collapsible Reflector is versatile in the field and in the. When it comes to photographic lingo, a reflector actually refers to two distinct pieces of equipment.

In the course of offering both the Extraction APIs and Crawlbot, our machine learning algorithms analyzed over 1 billion URLs each month, and we used the fraction of a penny we earned on each of these calls to build a top-tier research team to improve the accuracy of these machine learning models. We quickly attracted customers like Amazon, Walmart, Yandex, and major market news aggregators. Rather than passing in individual URLs, Crawlbot enabled our customers to ask questions like “let me know about all price changes across Target, Macys, JCrew, GAP, and 100 other retailers” or “let me build a news aggregator for my industry vertical”. Crawlbot worked as a cloud-managed search engine, crawling entire domains, feeding the URLs into our automatic analysis technology, and returning entire structured databases.

Crawlbot allowed us to grow the market a bit beyond an individual developer tool to businesses that were interested in market intelligence, whether about products, news aggregation, online discussion, or their own properties. We productized this as Crawlbot, which allowed our customers to create their own custom crawls of sites by providing a set of domains to crawl.
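To make the "let me know about all price changes" question concrete, here is a minimal Python sketch of how two structured crawl results could be compared. It is only an illustration: the snapshot format (a mapping from product URL to price), the `PriceChange` type, the `find_price_changes` function and the example URLs are all hypothetical and are not part of Crawlbot or any Diffbot API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceChange:
    """One product whose price differs between two crawls (hypothetical type)."""
    url: str
    old_price: float
    new_price: float


def find_price_changes(previous, current):
    """Compare two snapshots mapping product URL -> price.

    Only products present in both snapshots with a different price are
    reported; new or removed products are ignored in this sketch.
    """
    changes = []
    for url, new_price in current.items():
        old_price = previous.get(url)
        if old_price is not None and old_price != new_price:
            changes.append(PriceChange(url, old_price, new_price))
    return sorted(changes, key=lambda change: change.url)


# Made-up data standing in for the structured output of two crawls.
previous_crawl = {
    "https://retailer.example/p/1": 19.99,
    "https://retailer.example/p/2": 5.00,
}
current_crawl = {
    "https://retailer.example/p/1": 17.49,
    "https://retailer.example/p/2": 5.00,
    "https://retailer.example/p/3": 42.00,
}

changes = find_price_changes(previous_crawl, current_crawl)
for change in changes:
    print(f"{change.url}: {change.old_price} -> {change.new_price}")
```

The point of the sketch is that once pages are turned into structured records, a market-intelligence question reduces to an ordinary comparison between datasets rather than per-site scraping rules.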

We integrated the Gigablast technology into Diffbot, essentially adding a highly optimized web rendering engine and our automatic classification and extraction technology to Gigablast’s spidering, storage, search, and indexing technology. Fortunately for us, this meant that we did not have to expend significant resources in learning how to operate a production crawl of the web, and could focus on the task of making meaning out of the web pages. His team had written over half a million lines of C++ code to work out many of the edge cases required to crawl 99.999% of the web. Matt had competed against Google in the first search wars in the mid-2000s (remember when there were multiple search engines?) and had achieved a comparably sized web index, with real-time search, with a much smaller team and hardware infrastructure. Our next big break came when we met Matt Wells, the founder of the Gigablast search engine, whom we hired as our VP of Search. This niche market (the set of software developers that have a bunch of URLs to analyze) provided us a proving ground for our technology and allowed us to build a profitable company around advancing the state of the art in automated information extraction. Diffbot quickly powered apps like AOL, Instapaper, Snapchat, DuckDuckGo, and Bing, who used Diffbot to turn their URLs into structured information about articles, products, images, and discussion entities. For many kinds of web applications, automatically extracting structure from arbitrary URLs works 10X better than manually creating scraping rules for each site and maintaining these rulesets.

We launched this as a paid API on Hacker News for developers, which meant that the only way we would survive was if the technology provided something of value that was better than what could be produced in-house or by off-the-shelf solutions. We started perfecting the technology to automatically render and extract structured data from a single page, starting with article pages, and moving on to all the major kinds of pages on the web. So, we just decided to start developing the technology anyway, but without crawling the web. Bing was spending upwards of $1B per quarter to maintain a fast-follower position.

As a bootstrapped startup starting out at this time, we didn’t have the resources to crawl the whole web, nor were we willing to burn a large amount of investors’ money before proving the technology to ourselves. Even Yahoo eventually got out of the web crawling business, effectively outsourcing their crawl to Bing. However, they were never able to build technology that was 10X better before resources ran out. Many startups in the late 2000s raised large amounts of money with no more than an idea and a team to try to build a better Google. Crawling the web is capital-intensive stuff, and many a well-funded startup and large company have gone bust trying to do so.

However, as a small startup, we couldn’t crawl the web on day one. We believe that the only approach that can scale and make use of all of human knowledge is an autonomous system that can read and understand all of the documents on the public web. Our mission at Diffbot is to build the world’s first comprehensive map of human knowledge, which we call the Diffbot Knowledge Graph.

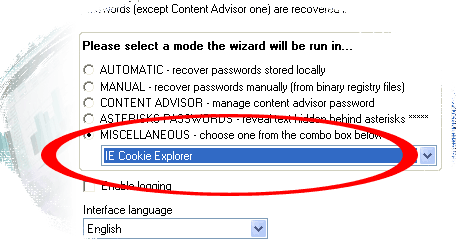

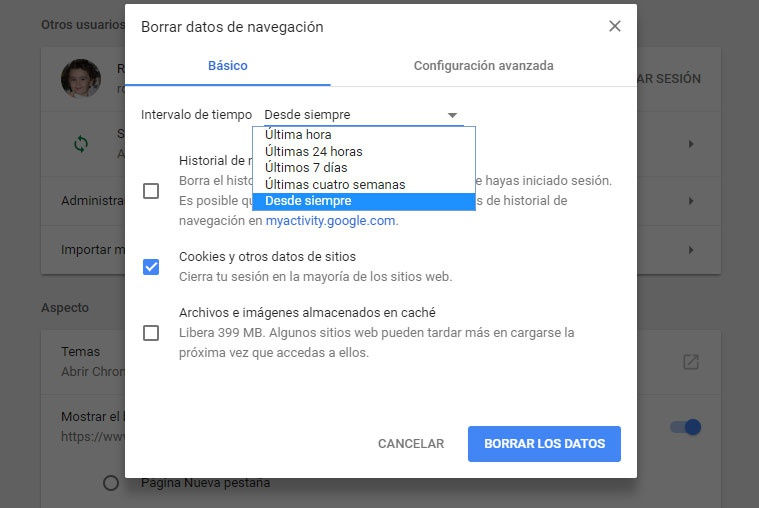

In the following section, you can find unique Web browser tools for both Internet Explorer and Mozilla browsers (including Firefox) that extract cookies, history data and cache information. If you want to download a package of all the tools listed below in one zip file, click here.

BrowsingHistoryView is a utility that reads the history data of 4 different Web browsers (Internet Explorer, Mozilla Firefox, Google Chrome, and Safari) and displays the browsing history of all these Web browsers in one table. The browsing history table includes the following information: Visited URL, Title, Visit Time, Visit Count, Web browser and User Profile. BrowsingHistoryView allows you to watch the browsing history of all user profiles in a running system, as well as to get the browsing history from an external hard drive. You can also export the browsing history into a csv/tab-delimited/html/xml file from the user interface, or from the command line, without displaying any user interface.

This utility displays the details of all cookies that Internet Explorer stores on your computer. In addition, it allows you to change the content of the cookies, delete unwanted cookies files, save the cookies into a readable text file, find cookies by specifying the domain name, view the cookies of other users and in other computers, and more.

This utility reads all information from the history file on your computer, and displays the list of all URLs that you have visited in the last few days. You can select one or more URL addresses, and then remove them from the history file or save them to a file. In addition, you are allowed to view the visited URL list of other user profiles on your computer, and even access the visited URL list on a remote computer, as long as you have permission.

IECacheView is a small utility that reads the cache folder of Internet Explorer, and displays the list of all files currently stored in the cache. For each cache file, the following information is displayed: Filename, Content Type, URL, Last Accessed Time, Last Modified Time, Expiration Time, Number Of Hits, File Size, Folder Name, and full path of the cache filename. You can easily save the cache information into a text/html/xml file, or copy the cache table to the clipboard and then paste it to another application, like Excel or OpenOffice Spreadsheet.

MZCookiesView is an alternative to the browser's standard 'Cookie Manager'. It displays the details of all cookies stored inside the cookies file (cookies.txt) in one table, and allows you to save the cookies list into a text, HTML or XML file, delete unwanted cookies, and backup/restore the cookies file.

MZHistoryView is a small utility that reads the history data file (history.dat) of Firefox/Mozilla/Netscape Web browsers, and displays the list of all visited Web pages. For each visited Web page, the following information is displayed: URL, First visit date, Last visit date, Visit counter, Referrer, Title, and Host name. You can also easily export the history data to a text/HTML/XML file.

MZCacheView is a small utility that reads the cache folder of Firefox/Mozilla/Netscape Web browsers, and displays the list of all files currently stored in the cache. For each cache file, the following information is displayed: URL, Content type, File size, Last modified time, Last fetched time, Expiration time, Fetch count, Server name, and more. You can easily select one or more items from the cache list, and then extract the files to another folder.
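Since BrowsingHistoryView can export its table to CSV, a short Python sketch shows how such an export might be summarized. The column names are the ones listed for the browsing history table; whether a real export uses exactly these header spellings is an assumption, and the sample rows and the `visits_per_browser` function are made up for illustration.

```python
import csv
import io
from collections import Counter

# Sample data in the shape of a CSV export. The header spelling is assumed
# from the column list in the text and may differ in a real export.
SAMPLE_EXPORT = """Visited URL,Title,Visit Time,Visit Count,Web browser,User Profile
https://example.com/,Example,2013-01-05 10:00:00,3,Firefox,alice
https://example.org/,Example Org,2013-01-05 10:05:00,1,Chrome,alice
https://example.com/docs,Docs,2013-01-06 09:30:00,2,Firefox,bob
"""


def visits_per_browser(csv_text):
    """Sum the 'Visit Count' column for each value of 'Web browser'."""
    reader = csv.DictReader(io.StringIO(csv_text))
    totals = Counter()
    for row in reader:
        totals[row["Web browser"]] += int(row["Visit Count"])
    return totals


totals = visits_per_browser(SAMPLE_EXPORT)
for browser, count in totals.most_common():
    print(browser, count)
```

To run this against a real file, the text would be read from disk first (for example with `open(path, newline="", encoding="utf-8")`); the encoding of an actual export is not stated in the text and would need checking.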

I tested the software rendering, and though it is quite CPU-hungry, it runs without hang-ups or visual artifacts. The system requirements state that a 3-D capable video card is required; if one is available, Google Earth will use OpenGL to render its graphics, but if not, it will attempt to use software rendering. desktop menu entries for GNOME and KDE, but I installed it on three machines, and the menu entry failed to appear in any of them.

By default, Google Earth installs in /usr/local/google-earth/ and creates a symbolic link in /usr/local/bin. System requirements are a 2.6-series kernel, glibc 2.3.5, 512MB of RAM, and an active network connection (necessary because the Google Earth client retrieves its visualization data dynamically from a server).

bin file, an executable shell script wrapped around a self-extracting installer. It was possible before to run previous versions of Google Earth under Wine, but the Google Earth 4 Beta is a native application built on Qt and OpenGL. The release notes indicate simplifications to the user interface, support for textures on 3-D models, and the addition of new features to Keyhole Markup Language (KML), Google Earth’s data exchange format.

For most Linux users, of course, the biggest news is simply the availability of a native Linux client. The new release is a beta of Google Earth 4, slated to be the next major revision. Following closely on the heels of its Picasa news, Google is offering a beta of Google Earth that - for the first time - includes a Linux version of the 3-D mapping and visualization program. |
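For readers who have not seen KML, the data exchange format mentioned above, the following Python sketch builds a minimal placemark document with the standard library. It is an illustration under assumptions: the namespace used is the one from the later standardized KML 2.2, which may not match what the version 4 beta expects, and the `make_placemark_kml` function, the place name and the coordinates are made up.

```python
import xml.etree.ElementTree as ET

# Assumption: the KML 2.2 namespace. The beta described here predates that
# version and may expect a different namespace URI.
KML_NS = "http://www.opengis.net/kml/2.2"


def make_placemark_kml(name, lon, lat):
    """Return a KML document containing a single named point."""
    ET.register_namespace("", KML_NS)
    kml = ET.Element(f"{{{KML_NS}}}kml")
    placemark = ET.SubElement(kml, f"{{{KML_NS}}}Placemark")
    ET.SubElement(placemark, f"{{{KML_NS}}}name").text = name
    point = ET.SubElement(placemark, f"{{{KML_NS}}}Point")
    # KML coordinates are written as longitude,latitude,altitude.
    ET.SubElement(point, f"{{{KML_NS}}}coordinates").text = f"{lon},{lat},0"
    return ET.tostring(kml, encoding="unicode")


kml_text = make_placemark_kml("Sample place", -122.0841, 37.4220)
print(kml_text)
```

Saving the resulting string to a file with a `.kml` extension is the usual way to hand such data to a KML-aware viewer.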

RSS Feed